A data lake is a centralized repository that allows storage of large volumes of structured, semi-structured, and unstructured data in its raw form without a predefined schema. It enables flexible data ingestion from various sources and supports analytics, visualization, and machine learning for valuable insights.

What is a data lake?

A data lake is a repository that stores large volumes of structured, semi-structured, and unstructured data in its native format, at any scale.

The purpose of a data lake is to hold raw data in its original form, without the need for a predefined schema or structure. This means that data lakes can ingest data from a wide variety of sources and store it more flexibly and cost-effectively than systems that require data to be structured before it is stored.

How does a data lake work?

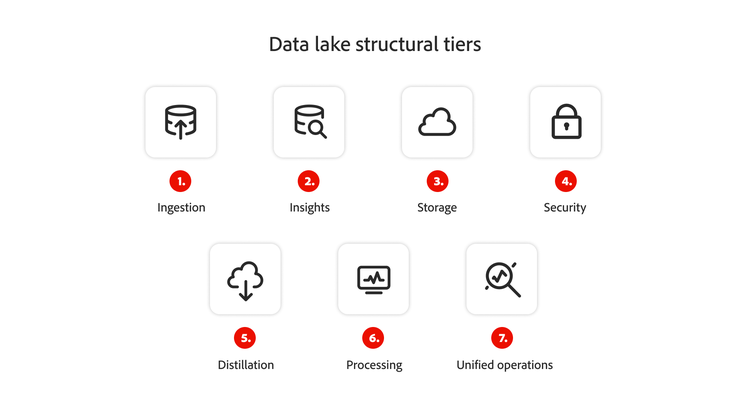

Data lakes ingest and store raw data in its original format. The process typically begins with data ingestion from multiple sources, such as IoT devices, social media feeds, enterprise systems, and databases. This data is then stored in a scalable storage solution, often on cloud-based platforms.

Unlike data in a data warehouse, data in a data lake remains in its raw, original form until it is needed, whether that form is structured, semi-structured, or unstructured. At that point, users can process, query, and transform the data into structured formats for various types of analytics, reporting, or visualization. Data lakes also support advanced functions such as machine learning and artificial intelligence by providing a vast pool of raw data to fuel these applications.
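The ingest-then-structure flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: a temporary local folder stands in for scalable cloud storage, and the function names (`ingest_raw`, `read_sensor_readings`), the folder layout, and the sample records are all assumptions made up for the example.

```python
import json
import tempfile
from pathlib import Path


def ingest_raw(lake_root: Path, source: str, payload: bytes, name: str) -> Path:
    """Store the payload exactly as received, under a per-source folder."""
    target_dir = lake_root / "raw" / source
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / name
    target.write_bytes(payload)  # no parsing, no validation, no schema
    return target


def read_sensor_readings(lake_root: Path) -> list:
    """Apply a structure only when the data is read (hypothetical schema)."""
    rows = []
    for path in sorted((lake_root / "raw" / "iot").glob("*.json")):
        record = json.loads(path.read_text())
        rows.append({
            "device": str(record["device"]),
            "temp_c": float(record["temp"]),  # types are normalized at read time
        })
    return rows


# A temporary folder plays the role of the lake's storage layer.
lake = Path(tempfile.mkdtemp())

# Ingest from different sources without reshaping anything.
ingest_raw(lake, "iot", b'{"device": "d1", "temp": "21.5"}', "0001.json")
ingest_raw(lake, "iot", b'{"device": "d2", "temp": 19, "extra": "ignored"}', "0002.json")
ingest_raw(lake, "social", b"free-form text post, stored untouched", "post-1.txt")

# Structure is imposed only now, for one specific analysis.
readings = read_sensor_readings(lake)
print(readings)
```

The two sensor records arrive with inconsistent types and extra fields, and the text post has no structure at all, yet all three are stored as-is. Only the reader that needs tabular sensor data decides which fields matter and how to type them.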

Why do you need a data lake?

Businesses in every industry use data to fuel their decision-making process and capitalize on growth opportunities. Using a data lake makes that possible by providing businesses with a reliable location for storing, managing, and analyzing vast amounts of data.

- Big data processing. Data lakes handle massive datasets, from terabytes to petabytes, enabling businesses to process large volumes of structured, semi-structured, and unstructured data. They support distributed computing frameworks, which provide the scalable processing and advanced analytics needed to handle big data.

- Unstructured data handling. Data lakes efficiently store unstructured data like videos, audio, images, and text, enabling businesses to analyze raw content. This is especially useful for industries like media, healthcare, and social media, allowing advanced analytics such as sentiment analysis and image recognition on non-tabular data.

- Real-time analytics. Data lakes enable real-time analytics by integrating with tools like Adobe Analytics, allowing businesses to monitor live data and make immediate decisions. This is crucial for industries like ecommerce, finance, and manufacturing, where timely insights and quick decision-making are essential.

By 2030, the global market for data lakes is projected to reach $45.8 billion, according to research published in 2024. In a 2021 survey of IT professionals, 69% said their company had already implemented a data lake, a number that is likely to have risen since.

When do you need a data lake?

- Big data processing. If you have large volumes of data that need to be processed and analyzed, a data lake can provide a scalable and cost-effective solution.

- Unstructured data. If your organization works with unstructured data, such as video, audio, images, and text files, a data lake can be an ideal solution. Data lakes can store data in its raw form, allowing you to run various analytics and artificial intelligence (AI) models to extract insights.

- Real-time data processing. If you need to process data in real time, a data lake can help you capture and process data quickly and build real-time analytics dashboards.

- Cost-effective storage. Data lakes can be a cost-effective way to store large volumes of data. Since data is stored in its raw form, you don’t need to spend time and resources structuring or cleaning it before storing it.

- Collaboration. Data lakes also allow you to centralize data from various departments within an organization, making it easier for teams to collaborate and share data. Different stakeholders, including data analysts, data scientists, and business leaders, can access the same data to perform their own analyses and make data-driven decisions.

Data lake vs. data warehouse

The most significant thing to remember is that a data lake ingests data and prepares it later. In contrast, a data warehouse prioritizes organization and structure above all else, just as a physical storehouse or distribution center would.

Think of the function and process of a data lake like rain falling into an actual lake. Any raindrops that hit the lake’s surface are accumulated within the body of water, and the same basic premise applies to a data lake.

Meanwhile, just as an actual warehouse would never accept a disorderly bundle of unpackaged goods or an unscheduled shipment, a data warehouse cannot receive new information unless it has already been prepared and structured.
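The contrast can be made concrete with a small Python sketch. It is a toy model under stated assumptions, not how any particular product works: the `Warehouse` and `Lake` classes, the `WAREHOUSE_SCHEMA` mapping, and the order records are all hypothetical names invented for this illustration. The warehouse checks structure when data arrives, while the lake accepts everything and applies structure when data is read.

```python
import json

# Hypothetical schema: field name -> required Python type.
WAREHOUSE_SCHEMA = {"order_id": int, "amount": float}


class Warehouse:
    """Accepts a record only if it already matches the schema."""

    def __init__(self, schema):
        self.schema = schema
        self.rows = []

    def load(self, record):
        if not isinstance(record, dict) or set(record) != set(self.schema):
            raise ValueError("record does not match the schema")
        for field, expected in self.schema.items():
            if not isinstance(record[field], expected):
                raise ValueError(f"{field} has the wrong type")
        self.rows.append(record)


class Lake:
    """Accepts anything as-is; structure is applied by the reader."""

    def __init__(self):
        self.objects = []

    def load(self, raw):
        self.objects.append(raw)  # like a raindrop: nothing is turned away

    def read(self, parse):
        results = []
        for raw in self.objects:
            try:
                results.append(parse(raw))
            except (ValueError, KeyError, TypeError):
                continue  # this object is not usable for this particular query
        return results


def parse_order(raw):
    """Reader-side preparation: coerce raw text into the order structure."""
    data = json.loads(raw)
    return {"order_id": int(data["order_id"]), "amount": float(data["amount"])}


incoming = [
    '{"order_id": 1, "amount": 9.99}',    # already prepared
    '{"order_id": "2", "amount": "5"}',   # right fields, wrong types
    "free-form note",                     # no structure at all
]

warehouse, lake, rejected = Warehouse(WAREHOUSE_SCHEMA), Lake(), 0
for raw in incoming:
    lake.load(raw)
    try:
        warehouse.load(json.loads(raw))
    except ValueError:  # also covers text that is not valid JSON
        rejected += 1

orders = lake.read(parse_order)
print(len(warehouse.rows), rejected, len(lake.objects), len(orders))
```

In this sketch the warehouse turns away the unprepared records at the door, while the lake keeps all of them and lets the reader recover the second order later by preparing it at query time.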